- Docs Home

- About TiDB Cloud

- Get Started

- Develop Applications

- Overview

- Quick Start

- Build a TiDB Developer Cluster

- CRUD SQL in TiDB

- Build a Simple CRUD App with TiDB

- Example Applications

- Connect to TiDB

- Design Database Schema

- Write Data

- Read Data

- Transaction

- Optimize

- Troubleshoot

- Reference

- Cloud Native Development Environment

- Manage Cluster

- Plan Your Cluster

- Create a TiDB Cluster

- Connect to Your TiDB Cluster

- Set Up VPC Peering Connections

- Use an HTAP Cluster with TiFlash

- Scale a TiDB Cluster

- Upgrade a TiDB Cluster

- Delete a TiDB Cluster

- Use TiDB Cloud API (Beta)

- Migrate Data

- Import Sample Data

- Migrate Data into TiDB

- Configure Amazon S3 Access and GCS Access

- Migrate from MySQL-Compatible Databases

- Migrate Incremental Data from MySQL-Compatible Databases

- Migrate from Amazon Aurora MySQL in Bulk

- Import or Migrate from Amazon S3 or GCS to TiDB Cloud

- Import CSV Files from Amazon S3 or GCS into TiDB Cloud

- Import Apache Parquet Files from Amazon S3 or GCS into TiDB Cloud

- Troubleshoot Access Denied Errors during Data Import from Amazon S3

- Export Data from TiDB

- Back Up and Restore

- Monitor and Alert

- Overview

- Built-in Monitoring

- Built-in Alerting

- Third-Party Monitoring Integrations

- Tune Performance

- Overview

- Analyze Performance

- SQL Tuning

- Overview

- Understanding the Query Execution Plan

- SQL Optimization Process

- Overview

- Logic Optimization

- Physical Optimization

- Prepare Execution Plan Cache

- Control Execution Plans

- TiKV Follower Read

- Coprocessor Cache

- Garbage Collection (GC)

- Tune TiFlash performance

- Manage User Access

- Billing

- Reference

- TiDB Cluster Architecture

- TiDB Cloud Cluster Limits and Quotas

- TiDB Limitations

- SQL

- Explore SQL with TiDB

- SQL Language Structure and Syntax

- SQL Statements

ADD COLUMNADD INDEXADMINADMIN CANCEL DDLADMIN CHECKSUM TABLEADMIN CHECK [TABLE|INDEX]ADMIN SHOW DDL [JOBS|QUERIES]ALTER DATABASEALTER INDEXALTER TABLEALTER TABLE COMPACTALTER USERANALYZE TABLEBATCHBEGINCHANGE COLUMNCOMMITCHANGE DRAINERCHANGE PUMPCREATE [GLOBAL|SESSION] BINDINGCREATE DATABASECREATE INDEXCREATE ROLECREATE SEQUENCECREATE TABLE LIKECREATE TABLECREATE USERCREATE VIEWDEALLOCATEDELETEDESCDESCRIBEDODROP [GLOBAL|SESSION] BINDINGDROP COLUMNDROP DATABASEDROP INDEXDROP ROLEDROP SEQUENCEDROP STATSDROP TABLEDROP USERDROP VIEWEXECUTEEXPLAIN ANALYZEEXPLAINFLASHBACK TABLEFLUSH PRIVILEGESFLUSH STATUSFLUSH TABLESGRANT <privileges>GRANT <role>INSERTKILL [TIDB]MODIFY COLUMNPREPARERECOVER TABLERENAME INDEXRENAME TABLEREPLACEREVOKE <privileges>REVOKE <role>ROLLBACKSELECTSET DEFAULT ROLESET [NAMES|CHARACTER SET]SET PASSWORDSET ROLESET TRANSACTIONSET [GLOBAL|SESSION] <variable>SHOW ANALYZE STATUSSHOW [GLOBAL|SESSION] BINDINGSSHOW BUILTINSSHOW CHARACTER SETSHOW COLLATIONSHOW [FULL] COLUMNS FROMSHOW CREATE SEQUENCESHOW CREATE TABLESHOW CREATE USERSHOW DATABASESSHOW DRAINER STATUSSHOW ENGINESSHOW ERRORSSHOW [FULL] FIELDS FROMSHOW GRANTSSHOW INDEX [FROM|IN]SHOW INDEXES [FROM|IN]SHOW KEYS [FROM|IN]SHOW MASTER STATUSSHOW PLUGINSSHOW PRIVILEGESSHOW [FULL] PROCESSSLISTSHOW PROFILESSHOW PUMP STATUSSHOW SCHEMASSHOW STATS_HEALTHYSHOW STATS_HISTOGRAMSSHOW STATS_METASHOW STATUSSHOW TABLE NEXT_ROW_IDSHOW TABLE REGIONSSHOW TABLE STATUSSHOW [FULL] TABLESSHOW [GLOBAL|SESSION] VARIABLESSHOW WARNINGSSHUTDOWNSPLIT REGIONSTART TRANSACTIONTABLETRACETRUNCATEUPDATEUSEWITH

- Data Types

- Functions and Operators

- Overview

- Type Conversion in Expression Evaluation

- Operators

- Control Flow Functions

- String Functions

- Numeric Functions and Operators

- Date and Time Functions

- Bit Functions and Operators

- Cast Functions and Operators

- Encryption and Compression Functions

- Locking Functions

- Information Functions

- JSON Functions

- Aggregate (GROUP BY) Functions

- Window Functions

- Miscellaneous Functions

- Precision Math

- Set Operations

- List of Expressions for Pushdown

- TiDB Specific Functions

- Clustered Indexes

- Constraints

- Generated Columns

- SQL Mode

- Table Attributes

- Transactions

- Views

- Partitioning

- Temporary Tables

- Cached Tables

- Character Set and Collation

- Read Historical Data

- System Tables

mysql- INFORMATION_SCHEMA

- Overview

ANALYZE_STATUSCLIENT_ERRORS_SUMMARY_BY_HOSTCLIENT_ERRORS_SUMMARY_BY_USERCLIENT_ERRORS_SUMMARY_GLOBALCHARACTER_SETSCLUSTER_INFOCOLLATIONSCOLLATION_CHARACTER_SET_APPLICABILITYCOLUMNSDATA_LOCK_WAITSDDL_JOBSDEADLOCKSENGINESKEY_COLUMN_USAGEPARTITIONSPROCESSLISTREFERENTIAL_CONSTRAINTSSCHEMATASEQUENCESSESSION_VARIABLESSLOW_QUERYSTATISTICSTABLESTABLE_CONSTRAINTSTABLE_STORAGE_STATSTIDB_HOT_REGIONS_HISTORYTIDB_INDEXESTIDB_SERVERS_INFOTIDB_TRXTIFLASH_REPLICATIKV_REGION_PEERSTIKV_REGION_STATUSTIKV_STORE_STATUSUSER_PRIVILEGESVIEWS

- System Variables

- API Reference

- Storage Engines

- Dumpling

- Table Filter

- Troubleshoot Inconsistency Between Data and Indexes

- FAQs

- Release Notes

- Support

- Glossary

Transaction Restraints

This document briefly introduces the transaction restraints in TiDB.

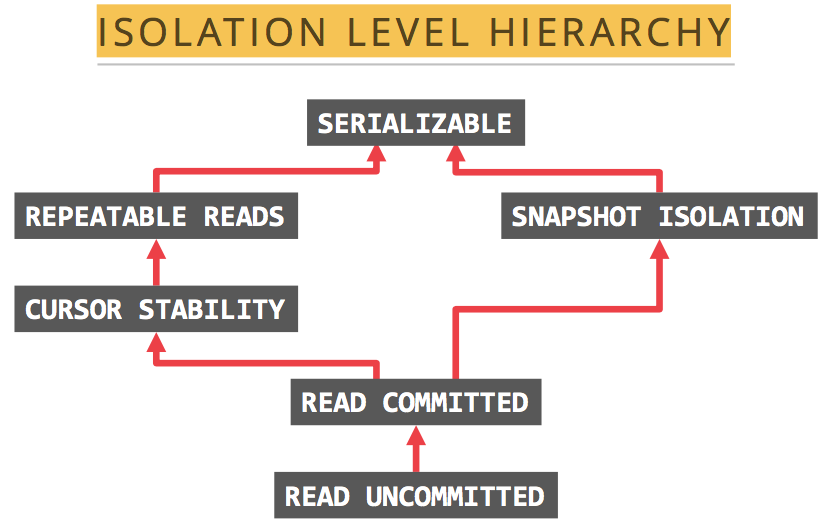

Isolation levels

The isolation levels supported by TiDB are RC (Read Committed) and SI (Snapshot Isolation), where SI is basically equivalent to the RR (Repeatable Read) isolation level.

Snapshot Isolation can avoid phantom reads

The SI isolation level of TiDB can avoid Phantom Reads, but the RR in ANSI/ISO SQL standard cannot.

The following two examples show what phantom reads is.

Example 1: Transaction A first gets

nrows according to the query, and then Transaction B changesmrows other than thesenrows or addsmrows that match the query of Transaction A. When Transaction A runs the query again, it finds that there aren+mrows that match the condition. It is like a phantom, so it is called a phantom read.Example 2: Admin A changes the grades of all students in the database from specific scores to ABCDE grades, but Admin B inserts a record with a specific score at this time. When Admin A finishes changing and finds that there is still a record (the one inserted by Admin B) that has not been changed yet. That is a phantom read.

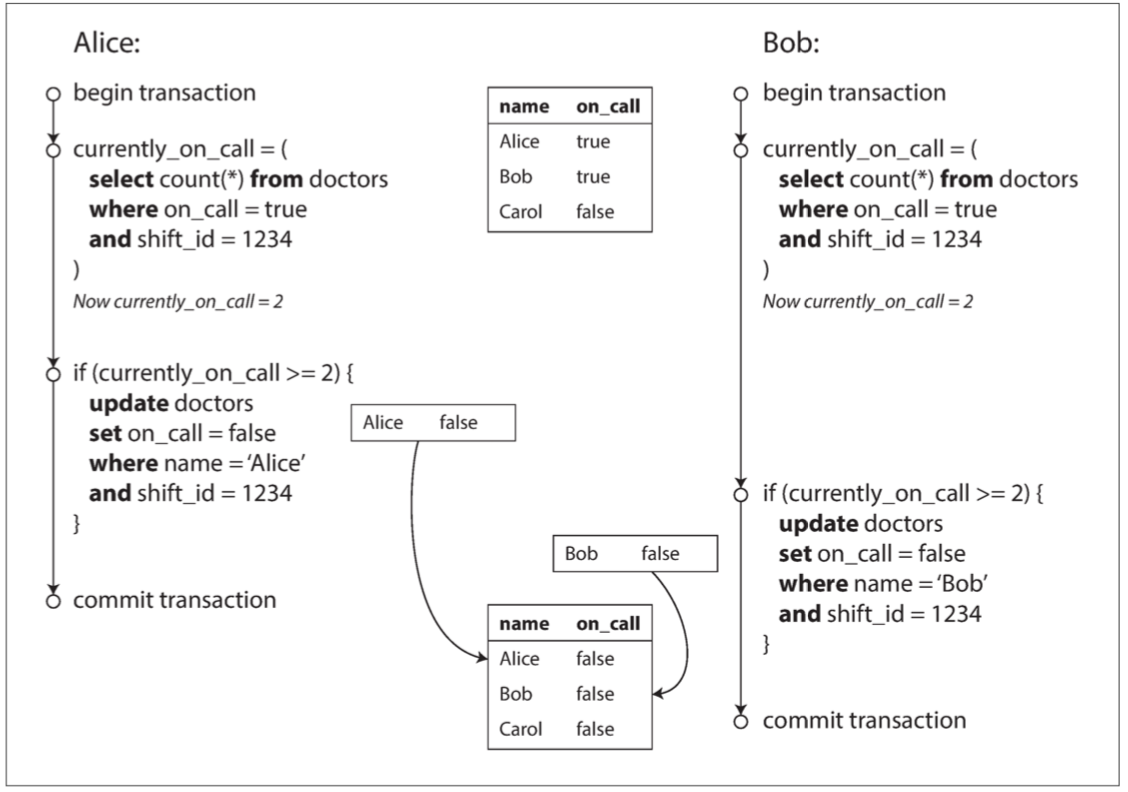

SI cannot avoid write skew

TiDB's SI isolation level cannot avoid write skew exceptions. You can use the SELECT FOR UPDATE syntax to avoid write skew exceptions.

A write skew exception occurs when two concurrent transactions read different but related records, and then each transaction updates the data it reads and eventually commits the transaction. If there is a constraint between these related records that cannot be modified concurrently by multiple transactions, then the end result will violate the constraint.

For example, suppose you are writing a doctor shift management program for a hospital. Hospitals typically require several doctors to be on call at the same time, but the minimum requirement is that at least one doctor is on call. Doctors can drop their shifts (for example, if they are feeling sick) as long as at least one doctor is on call during that shift.

Now there is a situation where doctors Alice and Bob are on call. Both are feeling sick, so they decide to take sick leave. They happen to click the button at the same time. Let's simulate this process with the following program:

- Java

- Golang

package com.pingcap.txn.write.skew;

import com.zaxxer.hikari.HikariDataSource;

import java.sql.Connection;

import java.sql.PreparedStatement;

import java.sql.ResultSet;

import java.sql.SQLException;

import java.util.concurrent.CountDownLatch;

import java.util.concurrent.ExecutorService;

import java.util.concurrent.Executors;

import java.util.concurrent.Semaphore;

public class EffectWriteSkew {

public static void main(String[] args) throws SQLException, InterruptedException {

HikariDataSource ds = new HikariDataSource();

ds.setJdbcUrl("jdbc:mysql://localhost:4000/test?useServerPrepStmts=true&cachePrepStmts=true");

ds.setUsername("root");

// prepare data

Connection connection = ds.getConnection();

createDoctorTable(connection);

createDoctor(connection, 1, "Alice", true, 123);

createDoctor(connection, 2, "Bob", true, 123);

createDoctor(connection, 3, "Carol", false, 123);

Semaphore txn1Pass = new Semaphore(0);

CountDownLatch countDownLatch = new CountDownLatch(2);

ExecutorService threadPool = Executors.newFixedThreadPool(2);

threadPool.execute(() -> {

askForLeave(ds, txn1Pass, 1, 1);

countDownLatch.countDown();

});

threadPool.execute(() -> {

askForLeave(ds, txn1Pass, 2, 2);

countDownLatch.countDown();

});

countDownLatch.await();

}

public static void createDoctorTable(Connection connection) throws SQLException {

connection.createStatement().executeUpdate("CREATE TABLE `doctors` (" +

" `id` int(11) NOT NULL," +

" `name` varchar(255) DEFAULT NULL," +

" `on_call` tinyint(1) DEFAULT NULL," +

" `shift_id` int(11) DEFAULT NULL," +

" PRIMARY KEY (`id`)," +

" KEY `idx_shift_id` (`shift_id`)" +

" ) ENGINE=InnoDB DEFAULT CHARSET=utf8mb4 COLLATE=utf8mb4_bin");

}

public static void createDoctor(Connection connection, Integer id, String name, Boolean onCall, Integer shiftID) throws SQLException {

PreparedStatement insert = connection.prepareStatement(

"INSERT INTO `doctors` (`id`, `name`, `on_call`, `shift_id`) VALUES (?, ?, ?, ?)");

insert.setInt(1, id);

insert.setString(2, name);

insert.setBoolean(3, onCall);

insert.setInt(4, shiftID);

insert.executeUpdate();

}

public static void askForLeave(HikariDataSource ds, Semaphore txn1Pass, Integer txnID, Integer doctorID) {

try(Connection connection = ds.getConnection()) {

try {

connection.setAutoCommit(false);

String comment = txnID == 2 ? " " : "" + "/* txn #{txn_id} */ ";

connection.createStatement().executeUpdate(comment + "BEGIN");

// Txn 1 should be waiting for txn 2 done

if (txnID == 1) {

txn1Pass.acquire();

}

PreparedStatement currentOnCallQuery = connection.prepareStatement(comment +

"SELECT COUNT(*) AS `count` FROM `doctors` WHERE `on_call` = ? AND `shift_id` = ?");

currentOnCallQuery.setBoolean(1, true);

currentOnCallQuery.setInt(2, 123);

ResultSet res = currentOnCallQuery.executeQuery();

if (!res.next()) {

throw new RuntimeException("error query");

} else {

int count = res.getInt("count");

if (count >= 2) {

// If current on-call doctor has 2 or more, this doctor can leave

PreparedStatement insert = connection.prepareStatement( comment +

"UPDATE `doctors` SET `on_call` = ? WHERE `id` = ? AND `shift_id` = ?");

insert.setBoolean(1, false);

insert.setInt(2, doctorID);

insert.setInt(3, 123);

insert.executeUpdate();

connection.commit();

} else {

throw new RuntimeException("At least one doctor is on call");

}

}

// Txn 2 done, let txn 1 run again

if (txnID == 2) {

txn1Pass.release();

}

} catch (Exception e) {

// If got any error, you should roll back, data is priceless

connection.rollback();

e.printStackTrace();

}

} catch (SQLException e) {

e.printStackTrace();

}

}

}

To adapt TiDB transactions, write a util according to the following code:

package main

import (

"database/sql"

"fmt"

"sync"

"github.com/pingcap-inc/tidb-example-golang/util"

_ "github.com/go-sql-driver/mysql"

)

func main() {

openDB("mysql", "root:@tcp(127.0.0.1:4000)/test", func(db *sql.DB) {

writeSkew(db)

})

}

func openDB(driverName, dataSourceName string, runnable func(db *sql.DB)) {

db, err := sql.Open(driverName, dataSourceName)

if err != nil {

panic(err)

}

defer db.Close()

runnable(db)

}

func writeSkew(db *sql.DB) {

err := prepareData(db)

if err != nil {

panic(err)

}

waitingChan, waitGroup := make(chan bool), sync.WaitGroup{}

waitGroup.Add(1)

go func() {

defer waitGroup.Done()

err = askForLeave(db, waitingChan, 1, 1)

if err != nil {

panic(err)

}

}()

waitGroup.Add(1)

go func() {

defer waitGroup.Done()

err = askForLeave(db, waitingChan, 2, 2)

if err != nil {

panic(err)

}

}()

waitGroup.Wait()

}

func askForLeave(db *sql.DB, waitingChan chan bool, goroutineID, doctorID int) error {

txnComment := fmt.Sprintf("/* txn %d */ ", goroutineID)

if goroutineID != 1 {

txnComment = "\t" + txnComment

}

txn, err := util.TiDBSqlBegin(db, true)

if err != nil {

return err

}

fmt.Println(txnComment + "start txn")

// Txn 1 should be waiting until txn 2 is done.

if goroutineID == 1 {

<-waitingChan

}

txnFunc := func() error {

queryCurrentOnCall := "SELECT COUNT(*) AS `count` FROM `doctors` WHERE `on_call` = ? AND `shift_id` = ?"

rows, err := txn.Query(queryCurrentOnCall, true, 123)

if err != nil {

return err

}

defer rows.Close()

fmt.Println(txnComment + queryCurrentOnCall + " successful")

count := 0

if rows.Next() {

err = rows.Scan(&count)

if err != nil {

return err

}

}

rows.Close()

if count < 2 {

return fmt.Errorf("at least one doctor is on call")

}

shift := "UPDATE `doctors` SET `on_call` = ? WHERE `id` = ? AND `shift_id` = ?"

_, err = txn.Exec(shift, false, doctorID, 123)

if err == nil {

fmt.Println(txnComment + shift + " successful")

}

return err

}

err = txnFunc()

if err == nil {

txn.Commit()

fmt.Println("[runTxn] commit success")

} else {

txn.Rollback()

fmt.Printf("[runTxn] got an error, rollback: %+v\n", err)

}

// Txn 2 is done. Let txn 1 run again.

if goroutineID == 2 {

waitingChan <- true

}

return nil

}

func prepareData(db *sql.DB) error {

err := createDoctorTable(db)

if err != nil {

return err

}

err = createDoctor(db, 1, "Alice", true, 123)

if err != nil {

return err

}

err = createDoctor(db, 2, "Bob", true, 123)

if err != nil {

return err

}

err = createDoctor(db, 3, "Carol", false, 123)

if err != nil {

return err

}

return nil

}

func createDoctorTable(db *sql.DB) error {

_, err := db.Exec("CREATE TABLE IF NOT EXISTS `doctors` (" +

" `id` int(11) NOT NULL," +

" `name` varchar(255) DEFAULT NULL," +

" `on_call` tinyint(1) DEFAULT NULL," +

" `shift_id` int(11) DEFAULT NULL," +

" PRIMARY KEY (`id`)," +

" KEY `idx_shift_id` (`shift_id`)" +

" ) ENGINE=InnoDB DEFAULT CHARSET=utf8mb4 COLLATE=utf8mb4_bin")

return err

}

func createDoctor(db *sql.DB, id int, name string, onCall bool, shiftID int) error {

_, err := db.Exec("INSERT INTO `doctors` (`id`, `name`, `on_call`, `shift_id`) VALUES (?, ?, ?, ?)",

id, name, onCall, shiftID)

return err

}

SQL log:

/* txn 1 */ BEGIN

/* txn 2 */ BEGIN

/* txn 2 */ SELECT COUNT(*) as `count` FROM `doctors` WHERE `on_call` = 1 AND `shift_id` = 123

/* txn 2 */ UPDATE `doctors` SET `on_call` = 0 WHERE `id` = 2 AND `shift_id` = 123

/* txn 2 */ COMMIT

/* txn 1 */ SELECT COUNT(*) AS `count` FROM `doctors` WHERE `on_call` = 1 and `shift_id` = 123

/* txn 1 */ UPDATE `doctors` SET `on_call` = 0 WHERE `id` = 1 AND `shift_id` = 123

/* txn 1 */ COMMIT

Running result:

mysql> SELECT * FROM doctors;

+----+-------+---------+----------+

| id | name | on_call | shift_id |

+----+-------+---------+----------+

| 1 | Alice | 0 | 123 |

| 2 | Bob | 0 | 123 |

| 3 | Carol | 0 | 123 |

+----+-------+---------+----------+

In both transactions, the application first checks if two or more doctors are on call; if so, it assumes that one doctor can safely take leave. Since the database uses the snapshot isolation, both checks return 2, so both transactions move on to the next stage. Alice updates her record to be off duty, and so does Bob. Both transactions are successfully committed. Now there are no doctors on duty which violates the requirement that at least one doctor should be on call. The following diagram (quoted from Designing Data-Intensive Applications) illustrates what actually happens.

Now let's change the sample program to use SELECT FOR UPDATE to avoid the write skew problem:

- Java

- Golang

package com.pingcap.txn.write.skew;

import com.zaxxer.hikari.HikariDataSource;

import java.sql.Connection;

import java.sql.PreparedStatement;

import java.sql.ResultSet;

import java.sql.SQLException;

import java.util.concurrent.CountDownLatch;

import java.util.concurrent.ExecutorService;

import java.util.concurrent.Executors;

import java.util.concurrent.Semaphore;

public class EffectWriteSkew {

public static void main(String[] args) throws SQLException, InterruptedException {

HikariDataSource ds = new HikariDataSource();

ds.setJdbcUrl("jdbc:mysql://localhost:4000/test?useServerPrepStmts=true&cachePrepStmts=true");

ds.setUsername("root");

// prepare data

Connection connection = ds.getConnection();

createDoctorTable(connection);

createDoctor(connection, 1, "Alice", true, 123);

createDoctor(connection, 2, "Bob", true, 123);

createDoctor(connection, 3, "Carol", false, 123);

Semaphore txn1Pass = new Semaphore(0);

CountDownLatch countDownLatch = new CountDownLatch(2);

ExecutorService threadPool = Executors.newFixedThreadPool(2);

threadPool.execute(() -> {

askForLeave(ds, txn1Pass, 1, 1);

countDownLatch.countDown();

});

threadPool.execute(() -> {

askForLeave(ds, txn1Pass, 2, 2);

countDownLatch.countDown();

});

countDownLatch.await();

}

public static void createDoctorTable(Connection connection) throws SQLException {

connection.createStatement().executeUpdate("CREATE TABLE `doctors` (" +

" `id` int(11) NOT NULL," +

" `name` varchar(255) DEFAULT NULL," +

" `on_call` tinyint(1) DEFAULT NULL," +

" `shift_id` int(11) DEFAULT NULL," +

" PRIMARY KEY (`id`)," +

" KEY `idx_shift_id` (`shift_id`)" +

" ) ENGINE=InnoDB DEFAULT CHARSET=utf8mb4 COLLATE=utf8mb4_bin");

}

public static void createDoctor(Connection connection, Integer id, String name, Boolean onCall, Integer shiftID) throws SQLException {

PreparedStatement insert = connection.prepareStatement(

"INSERT INTO `doctors` (`id`, `name`, `on_call`, `shift_id`) VALUES (?, ?, ?, ?)");

insert.setInt(1, id);

insert.setString(2, name);

insert.setBoolean(3, onCall);

insert.setInt(4, shiftID);

insert.executeUpdate();

}

public static void askForLeave(HikariDataSource ds, Semaphore txn1Pass, Integer txnID, Integer doctorID) {

try(Connection connection = ds.getConnection()) {

try {

connection.setAutoCommit(false);

String comment = txnID == 2 ? " " : "" + "/* txn #{txn_id} */ ";

connection.createStatement().executeUpdate(comment + "BEGIN");

// Txn 1 should be waiting for txn 2 done

if (txnID == 1) {

txn1Pass.acquire();

}

PreparedStatement currentOnCallQuery = connection.prepareStatement(comment +

"SELECT COUNT(*) AS `count` FROM `doctors` WHERE `on_call` = ? AND `shift_id` = ? FOR UPDATE");

currentOnCallQuery.setBoolean(1, true);

currentOnCallQuery.setInt(2, 123);

ResultSet res = currentOnCallQuery.executeQuery();

if (!res.next()) {

throw new RuntimeException("error query");

} else {

int count = res.getInt("count");

if (count >= 2) {

// If current on-call doctor has 2 or more, this doctor can leave

PreparedStatement insert = connection.prepareStatement( comment +

"UPDATE `doctors` SET `on_call` = ? WHERE `id` = ? AND `shift_id` = ?");

insert.setBoolean(1, false);

insert.setInt(2, doctorID);

insert.setInt(3, 123);

insert.executeUpdate();

connection.commit();

} else {

throw new RuntimeException("At least one doctor is on call");

}

}

// Txn 2 done, let txn 1 run again

if (txnID == 2) {

txn1Pass.release();

}

} catch (Exception e) {

// If got any error, you should roll back, data is priceless

connection.rollback();

e.printStackTrace();

}

} catch (SQLException e) {

e.printStackTrace();

}

}

}

package main

import (

"database/sql"

"fmt"

"sync"

"github.com/pingcap-inc/tidb-example-golang/util"

_ "github.com/go-sql-driver/mysql"

)

func main() {

openDB("mysql", "root:@tcp(127.0.0.1:4000)/test", func(db *sql.DB) {

writeSkew(db)

})

}

func openDB(driverName, dataSourceName string, runnable func(db *sql.DB)) {

db, err := sql.Open(driverName, dataSourceName)

if err != nil {

panic(err)

}

defer db.Close()

runnable(db)

}

func writeSkew(db *sql.DB) {

err := prepareData(db)

if err != nil {

panic(err)

}

waitingChan, waitGroup := make(chan bool), sync.WaitGroup{}

waitGroup.Add(1)

go func() {

defer waitGroup.Done()

err = askForLeave(db, waitingChan, 1, 1)

if err != nil {

panic(err)

}

}()

waitGroup.Add(1)

go func() {

defer waitGroup.Done()

err = askForLeave(db, waitingChan, 2, 2)

if err != nil {

panic(err)

}

}()

waitGroup.Wait()

}

func askForLeave(db *sql.DB, waitingChan chan bool, goroutineID, doctorID int) error {

txnComment := fmt.Sprintf("/* txn %d */ ", goroutineID)

if goroutineID != 1 {

txnComment = "\t" + txnComment

}

txn, err := util.TiDBSqlBegin(db, true)

if err != nil {

return err

}

fmt.Println(txnComment + "start txn")

// Txn 1 should be waiting until txn 2 is done.

if goroutineID == 1 {

<-waitingChan

}

txnFunc := func() error {

queryCurrentOnCall := "SELECT COUNT(*) AS `count` FROM `doctors` WHERE `on_call` = ? AND `shift_id` = ?"

rows, err := txn.Query(queryCurrentOnCall, true, 123)

if err != nil {

return err

}

defer rows.Close()

fmt.Println(txnComment + queryCurrentOnCall + " successful")

count := 0

if rows.Next() {

err = rows.Scan(&count)

if err != nil {

return err

}

}

rows.Close()

if count < 2 {

return fmt.Errorf("at least one doctor is on call")

}

shift := "UPDATE `doctors` SET `on_call` = ? WHERE `id` = ? AND `shift_id` = ?"

_, err = txn.Exec(shift, false, doctorID, 123)

if err == nil {

fmt.Println(txnComment + shift + " successful")

}

return err

}

err = txnFunc()

if err == nil {

txn.Commit()

fmt.Println("[runTxn] commit success")

} else {

txn.Rollback()

fmt.Printf("[runTxn] got an error, rollback: %+v\n", err)

}

// Txn 2 is done. Let txn 1 run again.

if goroutineID == 2 {

waitingChan <- true

}

return nil

}

func prepareData(db *sql.DB) error {

err := createDoctorTable(db)

if err != nil {

return err

}

err = createDoctor(db, 1, "Alice", true, 123)

if err != nil {

return err

}

err = createDoctor(db, 2, "Bob", true, 123)

if err != nil {

return err

}

err = createDoctor(db, 3, "Carol", false, 123)

if err != nil {

return err

}

return nil

}

func createDoctorTable(db *sql.DB) error {

_, err := db.Exec("CREATE TABLE IF NOT EXISTS `doctors` (" +

" `id` int(11) NOT NULL," +

" `name` varchar(255) DEFAULT NULL," +

" `on_call` tinyint(1) DEFAULT NULL," +

" `shift_id` int(11) DEFAULT NULL," +

" PRIMARY KEY (`id`)," +

" KEY `idx_shift_id` (`shift_id`)" +

" ) ENGINE=InnoDB DEFAULT CHARSET=utf8mb4 COLLATE=utf8mb4_bin")

return err

}

func createDoctor(db *sql.DB, id int, name string, onCall bool, shiftID int) error {

_, err := db.Exec("INSERT INTO `doctors` (`id`, `name`, `on_call`, `shift_id`) VALUES (?, ?, ?, ?)",

id, name, onCall, shiftID)

return err

}

SQL log:

/* txn 1 */ BEGIN

/* txn 2 */ BEGIN

/* txn 2 */ SELECT COUNT(*) AS `count` FROM `doctors` WHERE on_call = 1 AND `shift_id` = 123 FOR UPDATE

/* txn 2 */ UPDATE `doctors` SET on_call = 0 WHERE `id` = 2 AND `shift_id` = 123

/* txn 2 */ COMMIT

/* txn 1 */ SELECT COUNT(*) AS `count` FROM `doctors` WHERE `on_call` = 1 FOR UPDATE

At least one doctor is on call

/* txn 1 */ ROLLBACK

Running result:

mysql> SELECT * FROM doctors;

+----+-------+---------+----------+

| id | name | on_call | shift_id |

+----+-------+---------+----------+

| 1 | Alice | 1 | 123 |

| 2 | Bob | 0 | 123 |

| 3 | Carol | 0 | 123 |

+----+-------+---------+----------+

savepoint and nested transactions are not supported

TiDB does NOT support the savepoint mechanism and therefore does not support the PROPAGATION_NESTED propagation behavior. If your applications are based on the Java Spring framework that use the PROPAGATION_NESTED propagation behavior, you need to adapt it on the application side to remove the logic for nested transactions.

The PROPAGATION_NESTED propagation behavior supported by Spring triggers a nested transaction, which is a child transaction that is started independently of the current transaction. A savepoint is recorded when the nested transaction starts. If the nested transaction fails, the transaction will roll back to the savepoint state. The nested transaction is part of the outer transaction and will be committed together with the outer transaction.

The following example demonstrates the savepoint mechanism:

mysql> BEGIN;

mysql> INSERT INTO T2 VALUES(100);

mysql> SAVEPOINT svp1;

mysql> INSERT INTO T2 VALUES(200);

mysql> ROLLBACK TO SAVEPOINT svp1;

mysql> RELEASE SAVEPOINT svp1;

mysql> COMMIT;

mysql> SELECT * FROM T2;

+------+

| ID |

+------+

| 100 |

+------+

Large transaction restrictions

The basic principle is to limit the size of the transaction. At the KV level, TiDB has a restriction on the size of a single transaction. At the SQL level, one row of data is mapped to one KV entry, and each additional index will add one KV entry. The restriction is as follows at the SQL level:

- The maximum single row record size is

120 MB. You can configure it byperformance.txn-entry-size-limitfor TiDB v5.0 and later versions. The value is6 MBfor earlier versions. - The maximum single transaction size supported is

10 GB. You can configure it byperformance.txn-total-size-limitfor TiDB v4.0 and later versions. The value is100 MBfor earlier versions.

Note that for both the size restrictions and row restrictions, you should also consider the overhead of encoding and additional keys for the transaction during the transaction execution. To achieve optimal performance, it is recommended to write one transaction every 100 ~ 500 rows.

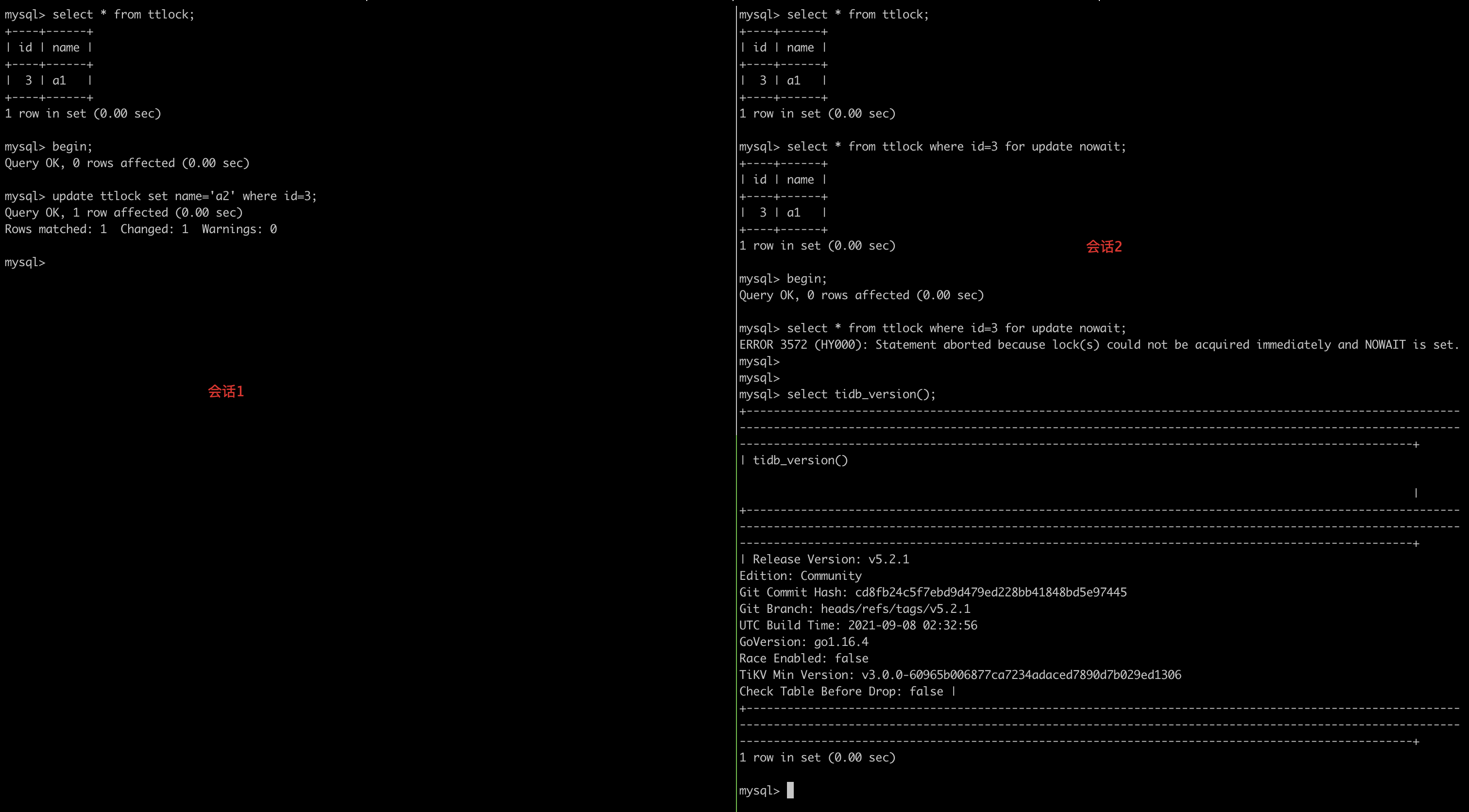

Auto-committed SELECT FOR UPDATE statements do NOT wait for locks

Currently locks are not added to auto-committed SELECT FOR UPDATE statements. The effect is shown in the following figure:

This is a known incompatibility issue with MySQL. You can solve this issue by using the explicit BEGIN;COMMIT; statements.